Abyss of The AI Economy: Enter The Not So Brave New World

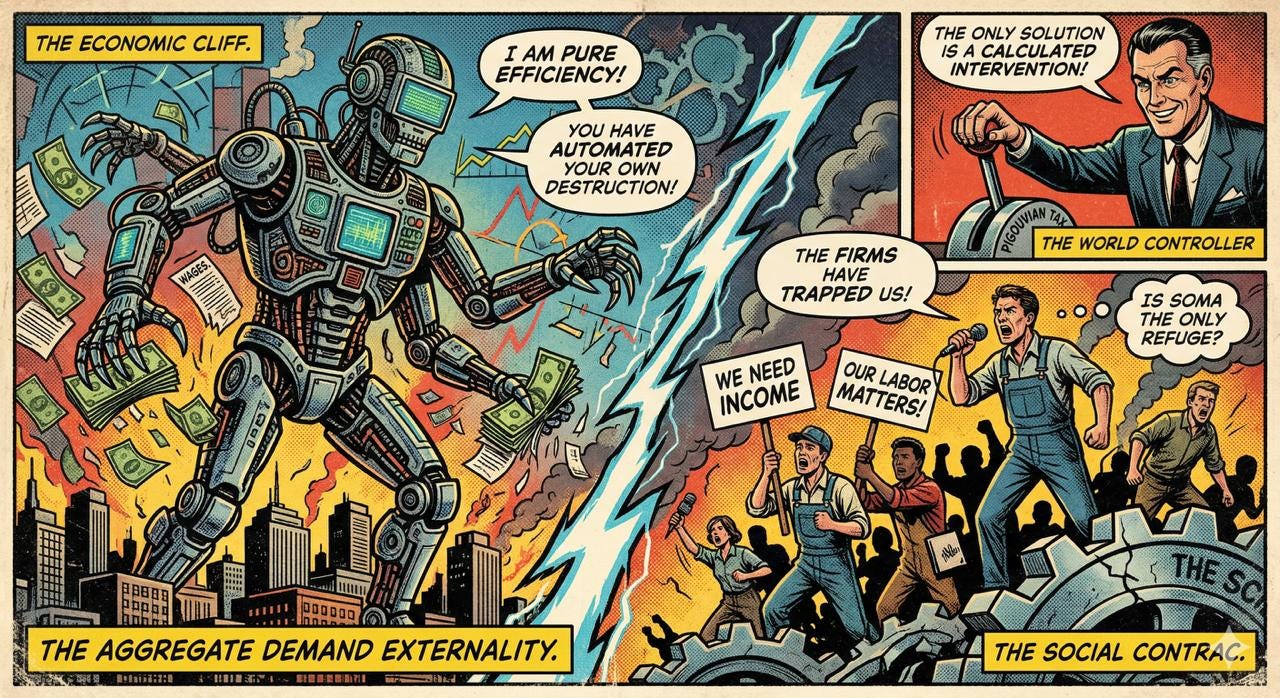

Automate to win, fire your customers, enjoy zero-demand utopia! Pigouvian tax to the rescue.

As an ardent economist and a philosophy geek, I’ve always had a thing for drawing analogies between academic work and curious studies; the recent paper by Falk and Tsoukalas, which has been all over the internet, immediately echoed Huxley in my mind. The convergence of Huxley’s Brave New World and the findings presented in “The AI Layoff Trap” struck me as a chilling synthesis: a world where technological efficiency and market competition inadvertently dismantle the social and economic foundations they were built to serve. While Huxley’s 1932 dystopia warns of a society engineered for stability at the cost of humanity, Falk and Tsoukalas’s 2026 paper provides the mathematical framework for how a modern, competitive economy might “automate its way to boundless productivity and zero demand”.

The Automation Arms Race: A Modern Caste System

In Brave New World, stability is maintained through a rigid caste system (Alphas to Epsilons), where every individual’s consumption and labor are perfectly calibrated. “The AI Layoff Trap”, on the other hand, paints a picture of a hectic “automation arms race.” In this scenario, companies find themselves stuck in a competitive loop where they feel the need to automate to stay afloat, even though they understand that this collective move ultimately harms the consumer base they depend on: the workers (the guys who earn money and then spend money, capitalism right?). This economic “Red Queen effect” is a bit like Huxley’s world, where technology isn’t a way to free us, but a way to control us and eventually stop us from moving forward. In the 2026 model, “better” AI doesn’t really fix the problem; it just makes things worse, much as the tech in Huxley’s London only makes the state’s sterile, demand-driven stability even stronger.

The Demand Externality: The Erosion of the “Savage” Market

The “AI Layoff Trap” hinges on something called the demand externality. Companies automate because they get all the savings from replacing a worker with AI, but they only have to deal with a small part of the overall drop in consumer demand. This sets up a bit of a “Prisoner’s Dilemma,” where smart, forward-thinking companies are all racing toward a financial cliff. Two things follow from this: Self-Destructive Productivity and The Consumption Trap. Self-Destructive Productivity happens when companies get super productive but run into a problem: the workers they replace, who are also the main consumers (remember, capitalism!), don’t have enough money to buy things anymore. The Consumption Trap is a bit more intense. In Huxley’s world, the idea is that “ending is better than mending,” and high consumption is seen as a social obligation. The paper suggests that today’s companies are unintentionally breaking this obligation by taking away the tools (wages) that make it possible.
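
To make the “Prisoner’s Dilemma” a bit more tangible, here is a tiny back-of-the-envelope sketch in Python. It is my own toy illustration, not the model from the paper: I assume identical firms, a fixed cost saving per automated job (`saving`), and a fixed amount of lost consumer spending per automated job (`demand_loss`) that is spread evenly across all firms. The function name and every number are made up.

```python
def firm_profit(automates, n_automating, n_firms, saving, demand_loss,
                base_profit=10.0):
    """Profit of one firm in a toy symmetric economy (hypothetical model)."""
    # Every automated job anywhere removes `demand_loss` of consumer spending,
    # and that lost spending is shared evenly by all firms.
    lost_revenue = n_automating * demand_loss / n_firms
    # Only the firm that automates pockets the cost saving.
    return base_profit + (saving if automates else 0.0) - lost_revenue


# Made-up numbers, chosen so that demand_loss / n_firms < saving < demand_loss.
N_FIRMS, SAVING, DEMAND_LOSS = 10, 3.0, 5.0

nobody = firm_profit(False, 0, N_FIRMS, SAVING, DEMAND_LOSS)
lone_automator = firm_profit(True, 1, N_FIRMS, SAVING, DEMAND_LOSS)
everybody = firm_profit(True, N_FIRMS, N_FIRMS, SAVING, DEMAND_LOSS)

# Whatever the rivals do, switching to automation pays more for the
# individual firm, because it bears only 1/N of the demand it destroys.
always_better_to_automate = all(
    firm_profit(True, rivals + 1, N_FIRMS, SAVING, DEMAND_LOSS)
    > firm_profit(False, rivals, N_FIRMS, SAVING, DEMAND_LOSS)
    for rivals in range(N_FIRMS)
)

print(nobody, lone_automator, everybody, always_better_to_automate)
```

With these numbers a lone automator earns 12.5 instead of 10, so everyone automates, and then everyone ends up at 8. Each firm did the individually sensible thing, and all of them are poorer than if nobody had moved.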

The Failure of Traditional Failsafes

Huxley’s World State keeps things balanced with soma and conditioning. Nowadays, economists sometimes suggest “reinstatement effects” (like creating new jobs) or Universal Basic Income (UBI) as ways to keep things steady. But the paper suggests that UBI and transfers might not be as effective as we think. The authors show that while UBI could improve living standards, it doesn’t change the incentive to automate, so the drive to automate too much continues. Market adjustments don’t come to the rescue either: unlike past technological changes that usually sorted themselves out, the “AI Layoff Trap” indicates that in highly competitive areas, even if we know what’s happening and adjust wages, we might still struggle to close the gap between automation and the workforce.

Conclusion: The Pigouvian Tax as the World Controller

The paper wraps up by suggesting that only a Pigouvian automation tax, set to match the uninternalized demand loss, can achieve a cooperative optimum. Think of this tax as a “World Controller,” a necessary central intervention to stop the competitive market from self-destructing. Huxley’s dystopian world came about through complete top-down design. The “AI Layoff Trap” highlights the risk of ending up in a similar sterile, human-less efficiency, not by design, but through the “strictly dominant” rational choices of firms in a market that has lost its human touch. Both the novel and the paper serve as a friendly reminder: without intervention, the “arms race” of efficiency could lead to a world where there’s plenty to buy, but no one left to buy it… And maybe, no one left to care (enter the dramatic music!).
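
For the curious, the logic of a tax “set to match the uninternalized demand loss” can be sketched in a few lines of Python. Again, this is a toy illustration under my own assumptions, not the paper’s model: identical firms, a fixed `saving` and `demand_loss` per automated job, lost demand shared evenly across firms, and tax revenue handed back in a way that doesn’t affect anyone’s decision. All names and numbers are hypothetical.

```python
import math


def private_gain(saving, demand_loss, n_firms, tax=0.0):
    """What one firm gains by automating one job (toy model)."""
    # The firm keeps the whole saving but bears only 1/n_firms of the lost demand.
    return saving - demand_loss / n_firms - tax


def collective_gain(saving, demand_loss):
    """What all firms together gain from that same automated job."""
    return saving - demand_loss


def pigouvian_tax(demand_loss, n_firms):
    """Tax equal to the share of the demand loss that lands on other firms."""
    return demand_loss * (n_firms - 1) / n_firms


N_FIRMS, DEMAND_LOSS = 10, 5.0  # made-up numbers
tax = pigouvian_tax(DEMAND_LOSS, N_FIRMS)

for saving in (3.0, 6.0):
    untaxed = private_gain(saving, DEMAND_LOSS, N_FIRMS)
    taxed = private_gain(saving, DEMAND_LOSS, N_FIRMS, tax)
    # With the tax, the firm's own incentive lines up with the collective one.
    assert math.isclose(taxed, collective_gain(saving, DEMAND_LOSS))
    print(saving, untaxed, taxed)
```

In this sketch the tax comes out at 4.5 per automated job. A job that saves 3 is no longer worth automating (the gain drops from 2.5 to -2), while a job that saves 6 still is (the gain drops from 5.5 to 1). The tax doesn’t ban automation; it only removes the part that pays off privately while costing everyone else more.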