The Silicon Motor: AI, Sam Altman, and the Return of the Objectivist Question

Will AI become Rearden Metal for unbound creativity, or a metered mind that erodes cognitive sovereignty? The new question echoes: Who controls the engine of the world?

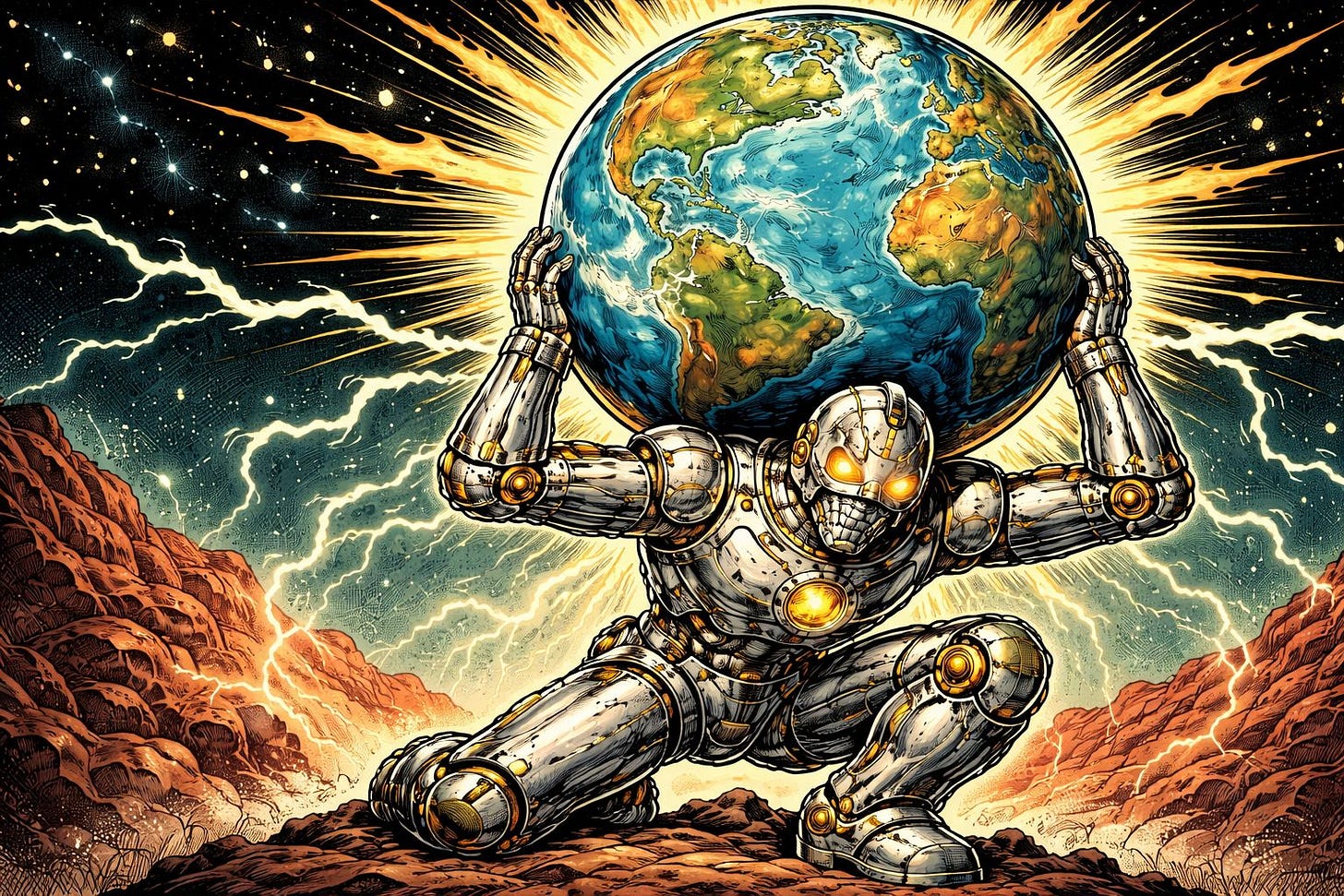

In the opening pages of Atlas Shrugged, the society Rand presents is haunted by a curious question: “Who is John Galt?” The question is less a search for a person than a quiet recognition that something essential has disappeared. In her story, that missing element is the creative mind, the force she calls the “motor of the world.” Recently, as OpenAI CEO Sam Altman heralds the coming “Intelligence Age,” a similar question is quietly emerging again. The industrial age, at least as we understand it, extended human muscle through machines. Artificial intelligence, by contrast, extends something deeper: the human intellect itself. This transformation raises a difficult question. In a world where intelligence flows like a mighty river, accessible to all, will it unleash the creativity of the human spirit, or will it harden into a monolith, a dependency that shackles the soul?

The New Rearden Metal: Intelligence as a Utility

In his public remarks, Altman has suggested that intelligence could become a utility, as accessible and cheap as electricity. On an optimistic (and commercially optimistic) reading, this could mean a world where reasoning power, design capability, and problem-solving are available to anyone with access to the technology. Such a breakthrough would resemble Rand’s Rearden Metal: a discovery that radically increases the efficiency of human life. History shows, however, that technological revolutions are seldom purely technical. When a revolutionary technology emerges, powerful institutions move to regulate, manage, and occasionally control it. In contemporary AI debates, calls for strict licensing, centralized oversight, or development pauses reflect this instinct. Some of these concerns are understandable. Yet they also raise an uncomfortable possibility: that regulation might sometimes protect existing institutions from disruption rather than society from danger.

The Problem of the “Metered Mind”

Here, the deeper tension lies not in AI itself, but in how it is delivered. If intelligence is provided mainly through centralized platforms, whether large corporations (such as OpenAI, DeepSeek, Anthropic, or Google) or state-backed infrastructure, individual users may come to depend on systems they do not control. The metaphor is simple: if access to intelligence is metered, then whoever controls the meter ultimately controls the flow. In Rand’s fiction, governments seize factories; in a digital world, control might look a little different. It could mean restricting access to computational resources, limiting model capabilities, or simply turning off an API. The peril lies not in the technology but in the philosophical risk that people relinquish their cognitive sovereignty to opaque systems beyond their own mastery.

Automation and Intellectual Passivity

Another concern emerges at the cultural level. AI systems can now generate code (perhaps a reason to reconsider majoring in Computer Science), essays, images, and even policy proposals. Used well and responsibly, these tools can expand human productivity. Used passively, they may encourage an intellectual laziness that is already widespread. Rand described a type of character she called the “second-hander”: someone who relies on the thinking of others rather than engaging reality directly. A society that consumes AI-generated output without understanding the principles behind it risks drifting toward a similar condition. The tools would produce knowledge in abundance, yet only a select few would understand the processes behind it. The result could be a strange paradox: greater technological power combined with weaker intellectual independence.

Decentralization and Cognitive Sovereignty

But there’s another way to think about it. Open-source AI and local computing could distribute intelligence instead of concentrating it in one place. Rather than depending on huge cloud systems, individuals and small groups could run capable models on their own hardware. This is a bit like Rand’s “strike,” but in a new form: creators keep control of their tools and of their intellectual output instead of withdrawing from the world. Under this kind of cognitive sovereignty, individuals are free to think, design, and create without depending on a centralized authority. Here, AI is no longer a managed utility but a personal instrument of reasoning, one that lets each mind pursue its own path of innovation and discovery.

The Choice Ahead

The future of AI will probably depend on how we balance these two ideas. One treats intelligence as a public utility, like electricity: safe, consistent, and managed by a central authority. The other sees AI as a way for human creativity to spread out, easily accessible to everyone and hard for any one group to control. Both approaches have their pros and cons. But the old question remains: Will technology help people make their own choices, or will it slowly take over and make them dependent on it? As we move into the Intelligence Age, the “engine of the world” might be starting up again, not in factories or trains, but in computers and code. What remains open is whether we will trust this new engine, or try to keep a close eye on how it runs.